| Tandem Project Leader | Mathias Krause |

| NHR@KIT Project Leader | Martin Frank |

| Project Coordinator | René Caspart |

| Team | SSPE |

| Researcher | Adrian Kummerländer |

| Open Source Software | OpenLB |

Introduction

Lattice Boltzmann Methods (LBM) are an established mesoscopic approach for simulating a wide variety of transport phenomena [1]. While they are uniquely suited to HPC applications, obtaining maximum performance across different targets, collision models and boundary conditions is an active topic of research.

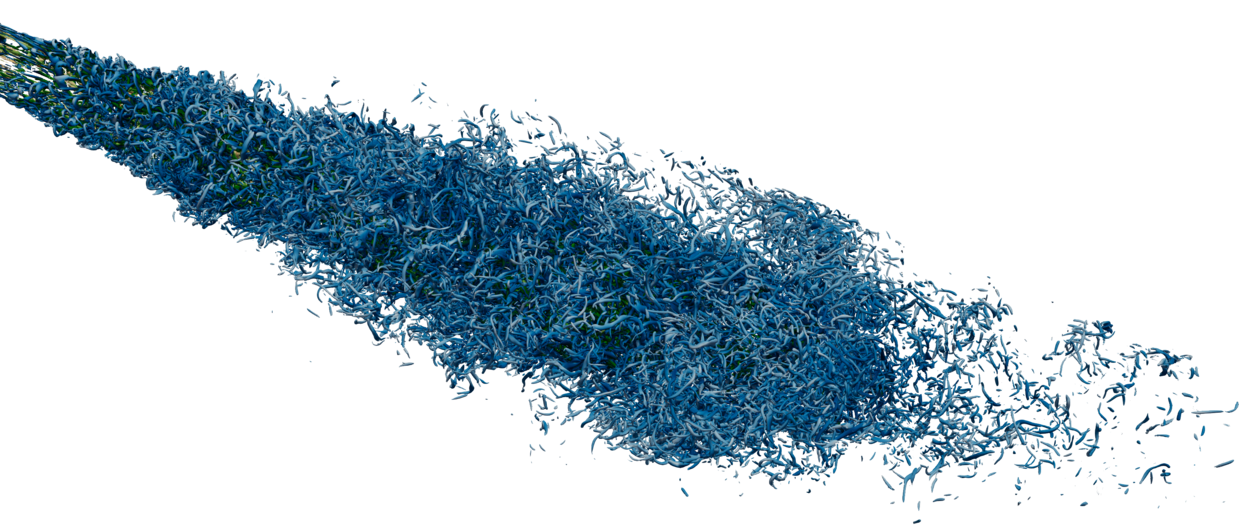

Figure: Q-Criterion of a turbulent nozzle flow resolved by 2.5 billion cells distributed to 120 GPUs on HoreKa

© Adrian Kummerländer

Project description

The principal goal of the project is to enable the utilization of arbitrary heterogeneous target hardware in the existing OpenLB software framework [2]. Specifically we want to support execution on GPGPUs and SIMD CPUs. Secondary goals include the further development of OpenLB’s architecture in order to incorporate support for adaptive refinement as a model-based performance optimization.

All of these goals are planned to be approached in a sustainable fashion, ensuring the continued reproducibility and reliability of OpenLB as one of the major open source LBM codes.

As a first step towards this, a new algorithm for implementing the LBM streaming step with very good bandwidth-relative performance on both CPU and GPU targets was developed [3]. This Periodic Shift (PS) pattern was produced by considering implicit propagation as a transformation of the memory bijection. Virtual memory manipulation is used on both CPU and GPU targets to efficiently implement these transformations.

Based on this development, the first release of OpenLB with support for using both vectorization on CPUs and GPGPUs was published [4] in early 2022. Its parallel efficiency was evaluated [5] in scalability tests on up to 320 CPU-only resp. 128 GPU accelerated nodes of the HoreKa supercomputer, yielding weak efficiencies up to 1.01 and strong efficiencies up to 0.96 as well as enabling up to 1.33 trillion cell updates per second on meshes containing up to 18 billion cells.

References

[1] M. J. Krause, A. Kummerländer, and S. Simonis. "Fluid Flow Simulation with Lattice Boltzmann Methods on High Performance Computers." NASA AMS Seminar Series. 2020.

[2] M. J. Krause, A. Kummerländer, S. J. Avis, H. Kusumaatmaja, D. Dapelo, F. Klemens, M. Gaedtke, N. Hafen, A. Mink, R. Trunk, J. E. Marquardt, M.-L. Maier, M. Haussmann, and S. Simonis . "OpenLB-Open source lattice Boltzmann code." In Computers & Mathematics with Applications. 2021. doi: 10.1016/j.camwa.2020.04.033.

[3] A. Kummerländer, M. Dorn, M. Frank, and M. J. Krause. "Implicit Propagation of Directly Addressed Grids in Lattice Boltzmann Methods." In Concurrency and Computation: Practice and Experience. 2022. doi: 10.1002/cpe.7509.

[4] A. Kummerländer, S. Avis, H. Kusumaatmaja, F. Bukreev, D. Dapelo, S. Großmann, N. Hafen, C. Holeksa, A. Husfeldt, J. Jeßberger, L. Kronberg, J. Marquardt, J. Mödl, J. Nguyen, T. Pertzel, S. Simonis, L. Springmann, N. Suntoyo, D. Teutscher, M. Zhong, and M.J. Krause. "OpenLB Release 1.5: Open Source Lattice Boltzmann Code." Version 1.5. 2022. doi: 10.5281/zenodo.6469606.

[5] A. Kummerländer, F. Bukreev, S. Berg, M. Dorn, and M.J. Krause. "Advances in Computational Process Engineering using Lattice Boltzmann Methods on High Performance Computers." In: High Performance Computing in Science and Engineering ' 21.